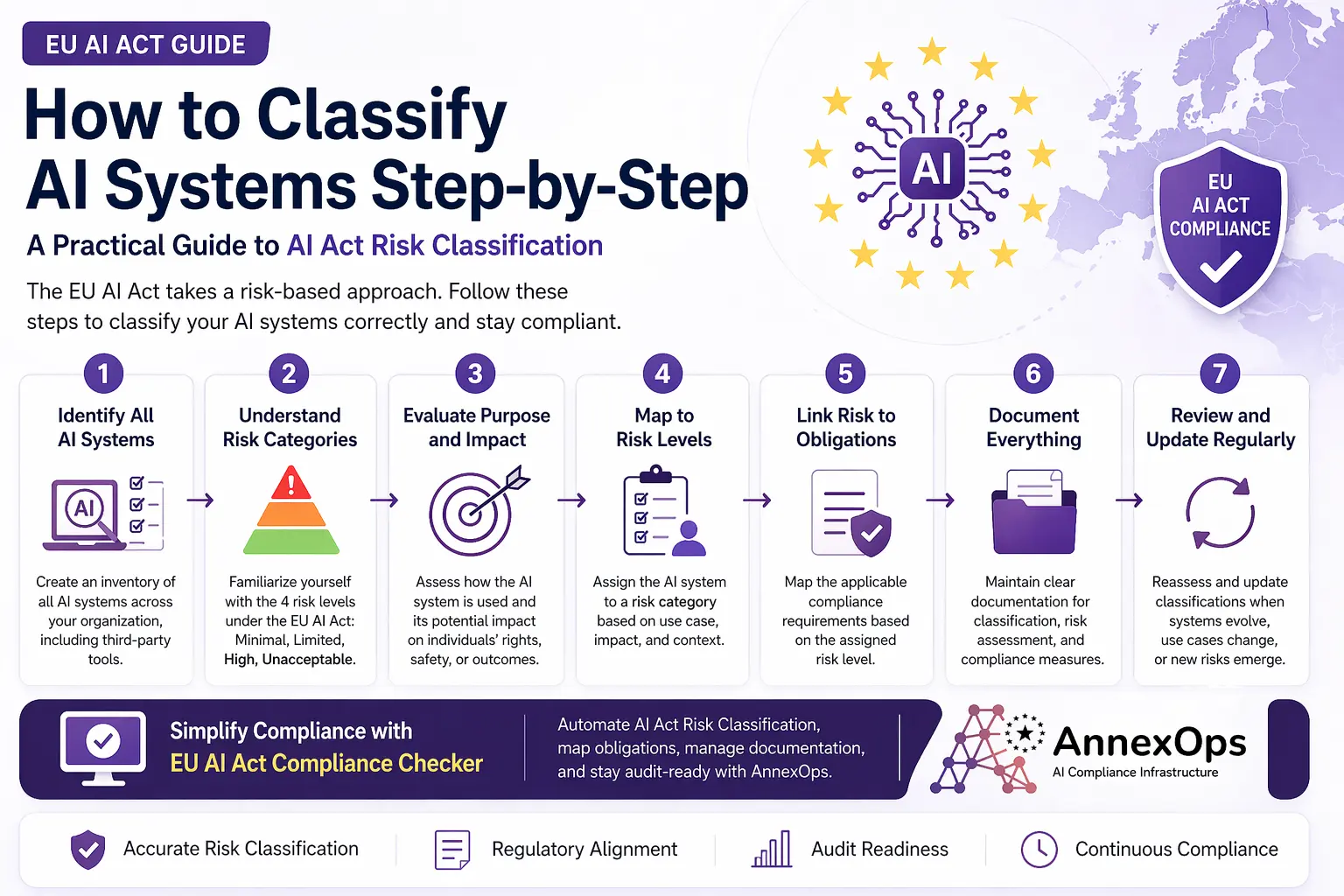

How to Classify AI Systems Under EU AI Act (Step-by-Step Guide)

Understanding how to classify AI systems under the EU AI Act is the first, and most critical, step toward compliance. If you get classification wrong, everything that follows (documentation, obligations, audits) can fall apart.

This guide breaks down the process into simple, actionable steps so your team can move from confusion to clarity.

Why Classifying AI Systems Under the EU AI Act Matters

The EU AI Act follows a risk-based approach, meaning every AI system is assigned a category based on its potential impact.

Your classification determines:

- What compliance requirements apply

- How much documentation is needed

- Whether your system is even allowed in the EU

In short, AI Act Risk Classification is the foundation of compliance.

Step 1: Identify All AI Systems

Start by creating a clear inventory of AI systems used across your organization.

What to Include:

- Internal AI tools (analytics, automation, decision-making systems)

- Customer-facing AI (chatbots, recommendation engines)

- Third-party AI integrations (APIs, SaaS tools)

Common Mistake:

Many teams only focus on core AI products and ignore embedded or third-party systems.

If an AI system is used in your operations, it must be classified.
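An inventory is easier to keep complete when it is built from every source at once. The Python below is a minimal sketch. `AISystem` and `build_inventory` are hypothetical names that do not come from AnnexOps or any library, and the example systems are made up.

```python
from dataclasses import dataclass

@dataclass
class AISystem:
    """One entry in a hypothetical AI inventory (field names are illustrative)."""
    name: str
    source: str   # "internal", "customer-facing", or "third-party"
    owner: str
    purpose: str

def build_inventory(*groups: list[AISystem]) -> list[AISystem]:
    """Merge systems from every source so embedded and third-party AI is not missed."""
    seen: set[str] = set()
    inventory: list[AISystem] = []
    for group in groups:
        for system in group:
            if system.name not in seen:  # de-duplicate by system name
                seen.add(system.name)
                inventory.append(system)
    return inventory

internal = [AISystem("churn-model", "internal", "data-team", "predict customer churn")]
customer = [AISystem("support-bot", "customer-facing", "cx-team", "answer support questions")]
vendors = [AISystem("cv-screener", "third-party", "hr-team", "rank job applicants")]

inventory = build_inventory(internal, customer, vendors)
for s in inventory:
    print(f"{s.name:12} {s.source:16} {s.purpose}")
```

Passing third-party systems in as a separate group makes it obvious when that list is empty. An empty list is often a sign of the "common mistake" described above.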

Step 2: Understand EU AI Act Risk Categories

Before classifying, you need a clear understanding of the four risk levels:

Minimal Risk

- Examples: spam filters, basic automation

- No risk-specific obligations

Limited Risk

- Requires transparency (e.g., chatbots must disclose AI use)

High Risk

- Used in sensitive areas like:
  - Hiring
  - Credit scoring
  - Healthcare

- Must meet strict compliance requirements (see Step 5)

Unacceptable Risk

- Prohibited systems (e.g., social scoring)

Most compliance challenges come from misidentifying high-risk AI systems.
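Because the four tiers are ordered by severity, they can be modeled as an ordered type. This lets you sort an inventory so that the most restrictive systems get reviewed first. The sketch below is illustrative only. `RiskLevel` and `is_allowed` are hypothetical names, and the tier comments paraphrase this guide rather than the legal text.

```python
from enum import IntEnum

class RiskLevel(IntEnum):
    """The four risk tiers, ordered from least to most restrictive."""
    MINIMAL = 1       # e.g. spam filters: no risk-specific obligations
    LIMITED = 2       # e.g. chatbots: transparency duties
    HIGH = 3          # e.g. hiring, credit scoring: strict requirements
    UNACCEPTABLE = 4  # e.g. social scoring: prohibited

def is_allowed(level: RiskLevel) -> bool:
    """Only the unacceptable tier is banned outright; other tiers are allowed if their obligations are met."""
    return level is not RiskLevel.UNACCEPTABLE

# Sort a (hypothetical) inventory so the most restrictive tiers are reviewed first.
systems = {"spam-filter": RiskLevel.MINIMAL, "cv-screener": RiskLevel.HIGH, "support-bot": RiskLevel.LIMITED}
review_order = sorted(systems, key=systems.get, reverse=True)
print(review_order)  # ['cv-screener', 'support-bot', 'spam-filter']
```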

Step 3: Evaluate the Purpose and Impact

Classification is not just about technology—it’s about how the AI is used.

Ask Key Questions:

- Does this AI affect human decisions?

- Could it impact rights, safety, or opportunities?

- Is it used in regulated sectors (finance, HR, healthcare)?

Example:

- A chatbot → Limited risk

- An AI hiring tool → High risk

Context matters more than complexity.
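The key questions can be turned into a first-pass screen. Running the screen on two deployments of the same model shows how the usage context, and not the technology, drives the result. This is a heuristic sketch under stated assumptions. The decision rule and the `UseContext`/`screen` names are hypothetical, and a real assessment still has to check the prohibited practices in Article 5 and the high-risk use cases in Annex III.

```python
from dataclasses import dataclass

@dataclass
class UseContext:
    """Answers to the key questions for one specific deployment of a system."""
    prohibited_practice: bool       # e.g. social scoring; checked first
    affects_human_decisions: bool
    impacts_rights_or_safety: bool
    regulated_sector: bool          # e.g. finance, HR, healthcare
    interacts_with_people: bool

def screen(ctx: UseContext) -> str:
    """Heuristic first pass (an assumption, not a legal determination)."""
    if ctx.prohibited_practice:
        return "candidate unacceptable risk"
    if ctx.affects_human_decisions and (ctx.impacts_rights_or_safety or ctx.regulated_sector):
        return "candidate high risk"
    if ctx.interacts_with_people:
        return "candidate limited risk"
    return "candidate minimal risk"

# The same underlying model, deployed in two different contexts.
chatbot = UseContext(prohibited_practice=False, affects_human_decisions=False,
                     impacts_rights_or_safety=False, regulated_sector=False,
                     interacts_with_people=True)
hiring_tool = UseContext(prohibited_practice=False, affects_human_decisions=True,
                         impacts_rights_or_safety=True, regulated_sector=True,
                         interacts_with_people=False)
print(screen(chatbot))      # candidate limited risk
print(screen(hiring_tool))  # candidate high risk
```

Each result is labeled "candidate" on purpose. The screen only flags systems for review and does not replace the classification decision.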

Step 4: Map AI Systems to Risk Levels

Now assign each AI system to a risk category based on:

- Use case

- Impact

- Industry context

Best Practice:

Document:

- Why the classification was chosen

- What criteria were used

This becomes critical during audits.
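One way to follow this best practice is to have every classification carry its own rationale and criteria. The sketch below does that. The rule table is a hypothetical internal example: a real one would have to be derived from the Act itself (Article 5, Article 6 and Annex III). `Classification` and `classify` are also illustrative names.

```python
from dataclasses import dataclass, field
from datetime import date

# Hypothetical internal rule table: use case -> risk level (illustrative only).
USE_CASE_RULES = {
    "social scoring": "unacceptable",
    "hiring": "high",
    "credit scoring": "high",
    "customer chatbot": "limited",
    "spam filtering": "minimal",
}

@dataclass
class Classification:
    system: str
    risk_level: str
    rationale: str                                     # why the level was chosen
    criteria: list[str] = field(default_factory=list)  # what was considered
    decided_on: date = field(default_factory=date.today)

def classify(system: str, use_case: str, impact: str, industry: str) -> Classification:
    # Unknown use cases are escalated instead of silently defaulting to "minimal".
    level = USE_CASE_RULES.get(use_case, "needs manual review")
    return Classification(
        system=system,
        risk_level=level,
        rationale=f"Use case '{use_case}' maps to '{level}' in the internal rule table.",
        criteria=[f"use case: {use_case}", f"impact: {impact}", f"industry: {industry}"],
    )

c = classify("cv-screener", "hiring", "ranks job applicants", "HR")
print(c.risk_level)  # high
print(c.rationale)
```

Escalating unknown use cases is a deliberate choice. A system that no rule matches is exactly the kind you want a human to look at.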

Step 5: Link Risk to EU AI Act Obligations

Once classified, map each system to its compliance requirements.

For High-Risk AI:

- Risk management system

- Data governance processes

- Technical documentation

- Human oversight

- Continuous monitoring

This step turns each classification into concrete compliance work.
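Once each system has a tier, a lookup table can produce a per-system checklist. The sketch below paraphrases the obligations listed in this guide. It is a simplification, not a complete statement of what the Act requires, and `OBLIGATIONS`, `obligations_for` and `build_checklist` are hypothetical names.

```python
# Obligations per tier, paraphrasing this guide (a simplified, incomplete sketch).
OBLIGATIONS: dict[str, list[str]] = {
    "minimal": [],
    "limited": ["Disclose to users that they are interacting with AI"],
    "high": [
        "Risk management system",
        "Data governance processes",
        "Technical documentation",
        "Human oversight",
        "Continuous monitoring",
    ],
}

def obligations_for(risk_level: str) -> list[str]:
    """Return the checklist for a tier; raises for prohibited systems."""
    if risk_level == "unacceptable":
        # A prohibited system cannot be made compliant; it must not be used in the EU.
        raise ValueError("prohibited system: remove or redesign it")
    return list(OBLIGATIONS[risk_level])  # copy so callers can tick items off safely

def build_checklist(classified: dict[str, str]) -> dict[str, list[str]]:
    """Map each system name to the obligations implied by its risk level."""
    return {name: obligations_for(level) for name, level in classified.items()}

checklist = build_checklist({"cv-screener": "high", "support-bot": "limited"})
print(checklist["support-bot"])
```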

Step 6: Document Everything

Under the EU AI Act, documentation is not optional.

You must maintain:

- Classification decisions

- Risk assessments

- System descriptions

- Compliance measures

Why This Matters:

If it's not documented, you cannot prove compliance to a regulator.
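Keeping the four required items in a single structured record makes gaps easy to spot before an audit does. The minimal sketch below uses `ComplianceRecord` and `missing_fields`, which are hypothetical names. It serializes to JSON only as an example format.

```python
import json
from dataclasses import dataclass, asdict, field

@dataclass
class ComplianceRecord:
    system_description: str
    classification_decision: str
    risk_assessment: str
    compliance_measures: list[str] = field(default_factory=list)

def missing_fields(record: ComplianceRecord) -> list[str]:
    """Names of required sections that are still empty."""
    return [name for name, value in asdict(record).items() if not value]

record = ComplianceRecord(
    system_description="Ranks job applicants from uploaded CVs",
    classification_decision="High risk: used in hiring",
    risk_assessment="",
)
print(missing_fields(record))  # ['risk_assessment', 'compliance_measures']
print(json.dumps(asdict(record), indent=2))
```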

Step 7: Review and Update Regularly

AI systems evolve—and so should your classification.

Review When:

- You update models

- Add new features

- Integrate third-party tools

AI compliance is continuous, not one-time.
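The review triggers above can be checked automatically against a change log. The sketch below adds a yearly fallback review, which is an assumption and not a legal deadline. It also includes use-case changes, which this guide's FAQ mentions. The event names and `needs_reclassification` are hypothetical.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Change types that should prompt a re-review (names are illustrative).
REVIEW_TRIGGERS = {"model_update", "new_feature", "third_party_integration", "use_case_change"}

@dataclass
class ChangeEvent:
    kind: str
    on: date

def needs_reclassification(events: list[ChangeEvent], last_reviewed: date,
                           today: date, max_age_days: int = 365) -> bool:
    """True if a triggering change happened after the last review, or the review is stale."""
    if any(e.kind in REVIEW_TRIGGERS and e.on > last_reviewed for e in events):
        return True
    # Assumed fallback: review at least once per max_age_days even without changes.
    return today - last_reviewed > timedelta(days=max_age_days)

last = date(2025, 1, 1)
events = [ChangeEvent("model_update", date(2025, 2, 1))]
print(needs_reclassification(events, last, today=date(2025, 3, 1)))  # True
```

Passing `today` explicitly keeps the check deterministic and easy to test.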

Why Manual Classification Fails

Many teams try to manage classification using spreadsheets or internal documents.

This leads to:

- Inconsistent classification

- Missing AI systems

- Poor documentation

- Audit risks

As your AI usage grows, manual processes become unmanageable.

Simplify with an EU AI Act Compliance Checker

Instead of handling everything manually, businesses are turning to an EU AI Act Compliance Checker to streamline the process.

A tool-based approach helps you:

- Identify AI systems automatically

- Standardize AI Act Risk Classification

- Map obligations instantly

- Maintain audit-ready documentation

How AnnexOps Helps

AnnexOps provides a structured platform to simplify AI classification and compliance.

With AnnexOps, you can:

- Automate risk classification

- Track compliance requirements

- Manage documentation in one place

- Stay audit-ready at all times

It transforms compliance from a manual burden into a scalable system.

Conclusion

To classify AI systems under the EU AI Act, you need a clear process:

- Identify all AI systems

- Understand risk categories

- Evaluate impact and use cases

- Assign risk levels

- Map obligations

- Document everything

- Continuously update

Getting classification right sets the foundation for full EU AI Act compliance.

If you want to simplify this process, explore an EU AI Act Compliance Checker like AnnexOps and turn complexity into clarity.

Learn how AnnexOps helps AI-driven companies prepare for the EU AI Act with clarity and confidence.

FAQs: Classifying AI Systems Under EU AI Act

1. What does it mean to classify AI systems under the EU AI Act?

It means assigning an AI system to a risk category (minimal, limited, high, or unacceptable) based on its purpose, impact, and use case.

2. Why is AI Act Risk Classification important?

AI Act Risk Classification determines the compliance requirements your AI system must follow, including documentation, governance, and audit obligations.

3. How do I identify AI systems in my organization?

List all systems that use AI, including internal tools, customer-facing applications, and third-party integrations that involve automated decision-making.

4. What are the four risk levels in the EU AI Act?

The EU AI Act defines four levels: minimal risk, limited risk, high risk, and unacceptable risk, each with different compliance requirements.

5. What qualifies as a high-risk AI system?

AI systems used in areas like hiring, credit scoring, healthcare, or critical infrastructure are typically classified as high-risk due to their potential impact.

6. Can the same AI system have different risk classifications?

Yes, the classification can change depending on how the AI system is used and the context in which it operates.

7. What is the first step in AI classification?

The first step is identifying all AI systems used within your organization to ensure nothing is missed during compliance assessment.

8. How often should AI systems be reclassified?

AI systems should be reviewed regularly, especially after updates, new features, or changes in use cases that could affect their risk level.

9. How does an EU AI Act Compliance Checker help?

An EU AI Act Compliance Checker automates the classification process, maps obligations, and ensures consistent, audit-ready documentation.

10. What happens if AI systems are misclassified?

Misclassification can lead to non-compliance, regulatory penalties, and increased risk during audits, especially for high-risk AI systems.

Author: Nitin Grover

Nitin Grover is a Compliance Manager at AnnexOps and an AI compliance strategist and writer. He specializes in EU AI Act compliance, AI governance, Annex IV documentation, AI risk management, and AI compliance operations, and he helps AI startups, SaaS companies, and enterprise AI teams across Europe build audit-ready, compliant AI systems.