AnnexOps Compliance

AI Act Risk Classification: Classify Your AI Systems Easily

The AI Act Risk Classification Engine covers all 8 Annex III use case categories and determines whether your AI system is prohibited, high-risk, limited-risk, or minimal-risk. Versioned rules. Legally defensible output.

✓ All 8 Annex III categories

✓ GPAI detection

✓ Provider vs. Deployer split

How AI Act risk classification works

Precise, versioned, legally defensible

Guided questionnaire

Versioned rule engine

Provider vs. Deployer split

GPAI detection

Core capabilities

Everything the classification needs

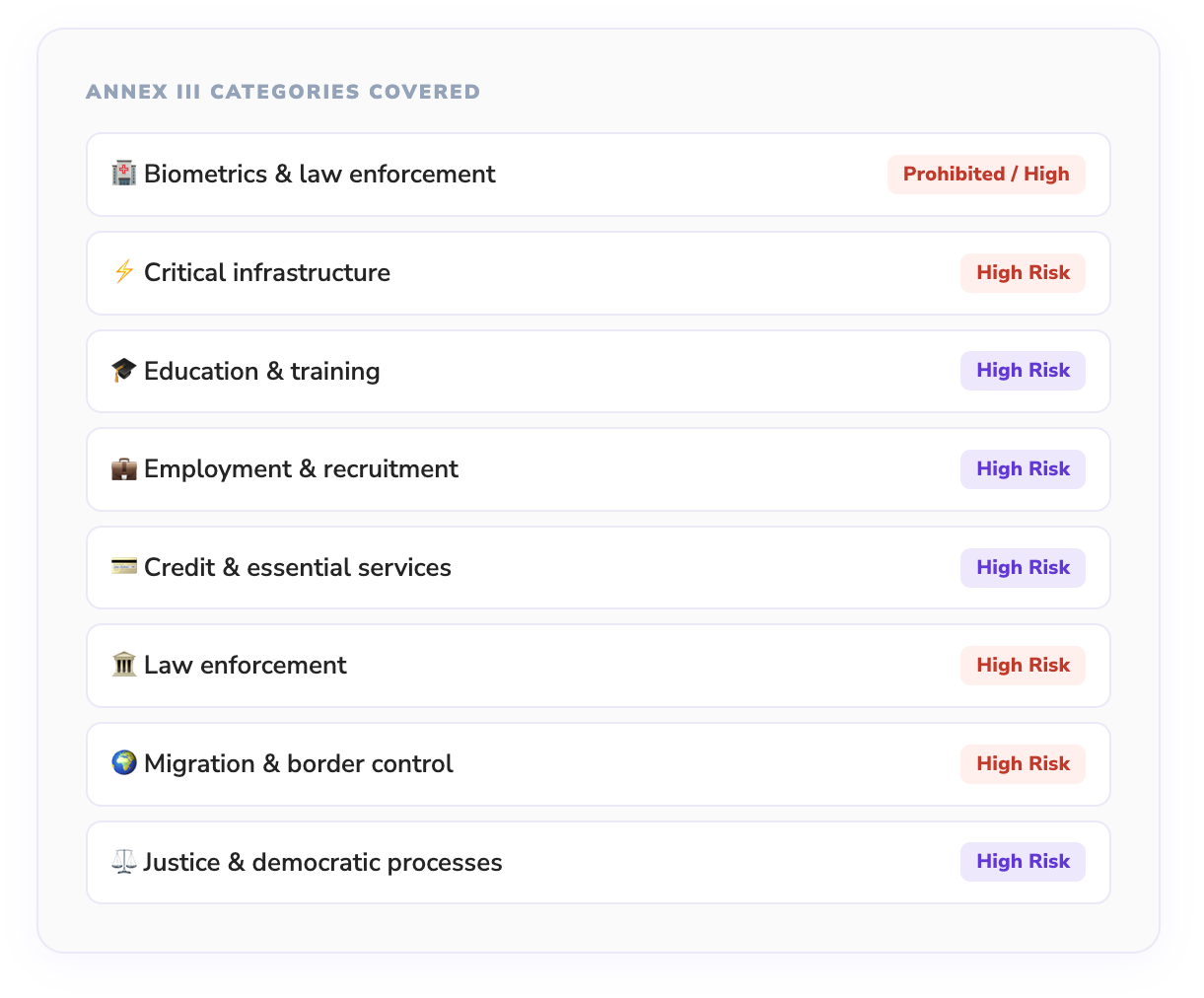

All 8 Annex III categories

Complete coverage of every Annex III use case category including biometrics, critical infrastructure, education, employment, essential services, law enforcement, migration, and justice.

Versioned classification rules

Classification rules are stored as versioned data in the database — never hardcoded. Retroactively check how past classifications would change under new rules.
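As a rough illustration of what "rules as versioned data" can look like, here is a minimal Python sketch. It is not AnnexOps' actual schema or API: the `RULESETS` table, the version labels, the use-case keys, and the risk levels assigned to them are all hypothetical placeholders, and a real engine would load the rules from a database rather than define them inline.

```python
from dataclasses import dataclass

# Hypothetical rule tables keyed by version. The risk levels below are
# placeholder values for illustration only, not legal statements.
RULESETS = {
    "2024.1": {"employment": "high-risk", "content_recommendation": "minimal-risk"},
    "2025.1": {"employment": "high-risk", "content_recommendation": "limited-risk"},
}


@dataclass(frozen=True)
class Classification:
    system: str
    use_case: str
    rule_version: str
    risk_level: str


def classify(system, use_case, rule_version):
    """Look up the risk level for a use case under one specific rule version."""
    rules = RULESETS[rule_version]
    # Unknown use cases are marked "unclassified" instead of being guessed.
    risk_level = rules.get(use_case, "unclassified")
    return Classification(system, use_case, rule_version, risk_level)


def reclassify_diff(past, new_version):
    """Return (old, new) pairs whose risk level changes under new_version."""
    changed = []
    for old in past:
        new = classify(old.system, old.use_case, new_version)
        if new.risk_level != old.risk_level:
            changed.append((old, new))
    return changed
```

Because each stored result carries its `rule_version`, past classifications can be replayed against a newer rule set and only the ones that change need review.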

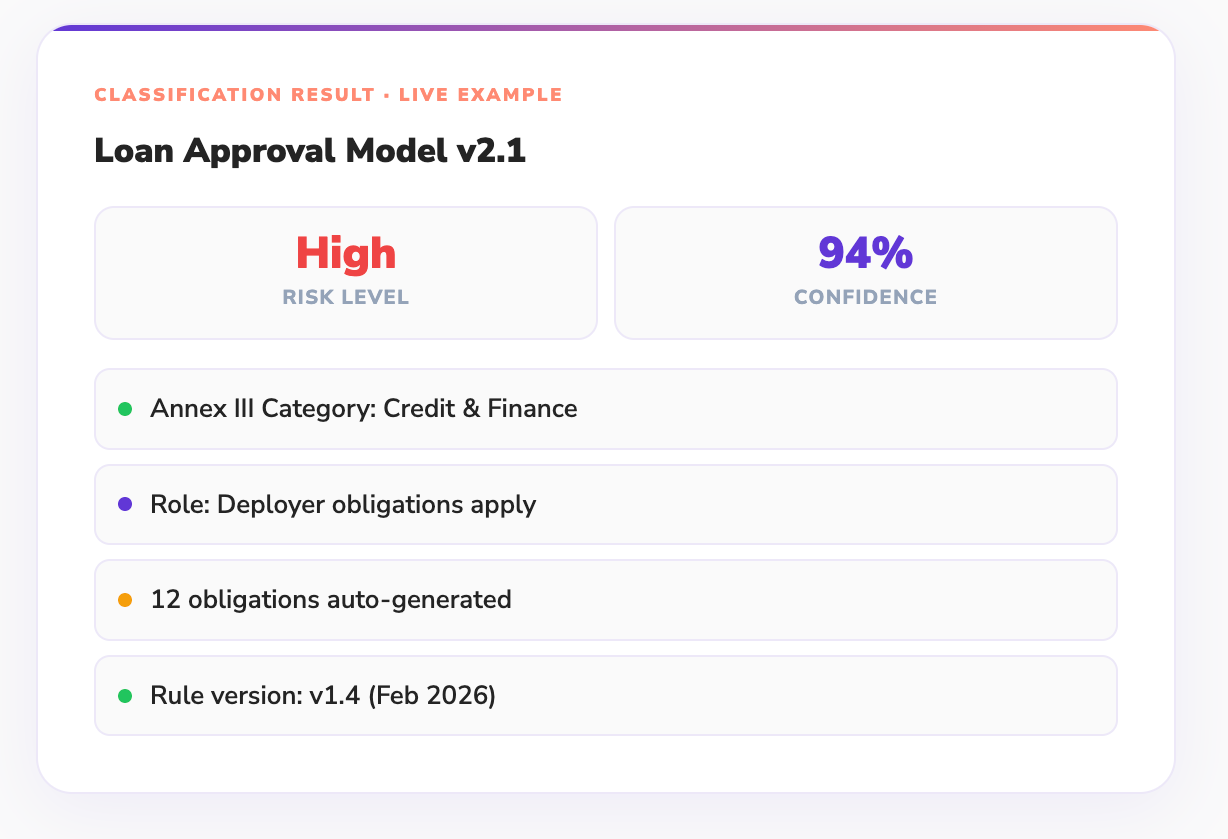

Provider and Deployer determination

The engine analyses your role in the AI value chain to determine whether provider obligations (Article 16), deployer obligations (Article 26), or both apply to you.
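The role split can be pictured as two independent checks whose results may both be true. The Python sketch below is an assumption-laden simplification, not the engine's real logic: the `Role` enum, the two boolean inputs, and the function names are hypothetical, and an actual determination would rest on many more questions.

```python
from enum import Flag


class Role(Flag):
    NONE = 0
    PROVIDER = 1
    DEPLOYER = 2


# Articles named in the text above, keyed by role.
OBLIGATIONS = {Role.PROVIDER: "Article 16", Role.DEPLOYER: "Article 26"}


def determine_roles(places_on_market_under_own_name, uses_under_own_authority):
    """Combine two simplified yes/no answers into a set of roles."""
    roles = Role.NONE
    if places_on_market_under_own_name:
        roles |= Role.PROVIDER
    if uses_under_own_authority:
        roles |= Role.DEPLOYER
    return roles


def obligations_for(roles):
    """List the obligation articles that apply to the given roles."""
    return [article for role, article in OBLIGATIONS.items() if role in roles]
```

An organisation that builds a system and also runs it internally would answer yes to both questions and receive both articles.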

GPAI and systemic risk detection

Detects whether your system is a general-purpose AI (GPAI) model under Article 3(63). Flags models whose cumulative training compute exceeds 10²⁵ floating-point operations (FLOPs) for the additional systemic-risk obligations in Article 55.
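The compute check itself is simple arithmetic. The following Python sketch shows one way it could be expressed; the function name, its parameters, and the returned keys are hypothetical, and it assumes the training compute is already known as a single number.

```python
# Threshold cited above, kept as a float so it compares cleanly
# with float inputs such as 3e25.
SYSTEMIC_RISK_FLOPS = 1e25


def gpai_flags(is_general_purpose_model, training_flops):
    """Flag a GPAI model whose training compute is above the threshold."""
    presumed_systemic = (
        is_general_purpose_model and training_flops > SYSTEMIC_RISK_FLOPS
    )
    return {
        "gpai": is_general_purpose_model,
        "systemic_risk_flag": presumed_systemic,
    }
```

A model trained with 3e25 operations would be flagged, while one trained with 5e24 would not. Systems that are not general-purpose models are never flagged by this check, whatever their compute.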

Guided classification questionnaire

A structured questionnaire walks your team through the classification process step by step. Each answer narrows the Annex III scope until a confident classification is reached.
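To make the narrowing idea concrete, here is a small Python sketch in which each "no" answer removes categories from the candidate set. The question keys and wording are invented for illustration and are far simpler than any real questionnaire; only the eight category names come from the list above.

```python
ANNEX_III = frozenset({
    "biometrics", "critical infrastructure", "education", "employment",
    "essential services", "law enforcement", "migration", "justice",
})

# Hypothetical questions: (answer key, question text, categories ruled out by "no").
QUESTIONS = [
    ("uses_biometrics", "Does the system process biometric data?", {"biometrics"}),
    ("used_in_education", "Is it used in education or training?", {"education"}),
    ("used_in_employment", "Is it used in hiring or worker management?", {"employment"}),
]


def narrow_scope(answers):
    """Return the Annex III categories still in scope after the given answers."""
    candidates = set(ANNEX_III)
    for key, _text, ruled_out_if_no in QUESTIONS:
        # Only an explicit "no" removes categories; unanswered questions
        # leave the candidate set untouched.
        if answers.get(key) is False:
            candidates -= ruled_out_if_no
    return candidates
```

For example, `narrow_scope({"uses_biometrics": False})` leaves seven categories in scope, and further answers keep shrinking the set.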

Shareable classification record

Every completed classification produces a shareable record including the questionnaire responses, rule version, confidence score, and legal reasoning — audit-ready from day one.
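A record with the fields listed above could be modelled roughly as follows. This Python sketch is only an assumption about shape: the class name, field names, and JSON layout are hypothetical and do not describe the format AnnexOps actually exports.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class ClassificationRecord:
    system_name: str
    risk_level: str
    rule_version: str
    confidence: float
    legal_reasoning: str
    # Questionnaire responses keyed by a question identifier.
    responses: dict = field(default_factory=dict)

    def to_json(self):
        """Serialise the record to stable, human-readable JSON for sharing."""
        return json.dumps(asdict(self), indent=2, sort_keys=True)
```

Sorting the keys keeps the output stable between exports, which makes two versions of the same record easy to compare.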

Integrations

Works With Your Existing Stack

- 🐙 GitHub Actions

- 🦊 GitLab CI

- 🤗 HuggingFace

- 🧠 Anthropic Claude

- 🌟 Mistral AI

- ☁️ AWS SageMaker

- 📊 Grafana

- 🔴 Jira

- 💼 Linear

- 🔔 Slack

- 🔷 Google Vertex AI

- 🤖 OpenAI API

FAQs

Some Frequently Asked Questions and Their Answers

How does an AI risk classification tool determine whether a system is high-risk under the EU AI Act?

An AI risk classification tool evaluates the real-world impact of the AI system, not just the model type. It analyzes factors such as decision influence, domain of use (e.g., hiring, credit scoring), and user impact. AnnexOps applies structured logic aligned with the EU AI Act to map each system into risk categories like high-risk, limited-risk, or minimal-risk, ensuring consistent and auditable classification.

Why is AI risk classification critical for EU AI Act compliance?

The EU AI Act is built on a risk-based regulatory model. Without accurate classification, organizations cannot determine which compliance obligations apply. Classifying a system too strictly adds unnecessary compliance costs, and classifying it too leniently risks regulatory violations, which makes AI risk classification the foundation of any AI compliance strategy.

Can the same AI system be classified differently depending on its use case?

Yes, and this is one of the most important aspects of AI risk classification. The same algorithm can fall into different categories depending on how it is used. For example, a recommendation engine used for content personalization may be minimal-risk, but the same logic used in hiring decisions could be classified as high-risk. AnnexOps evaluates systems based on context and impact, not just model architecture.

How does AnnexOps handle AI risk classification across multiple AI systems in an enterprise?

AnnexOps acts as AI risk management software for the EU AI Act, enabling organizations to classify multiple AI systems across teams and environments. It provides centralized visibility, so every system is evaluated against the same classification rules and regulatory criteria.

Classify your first AI system in 5 minutes

Free tier classifies one system and shows all resulting obligations. No credit card required.